Today’s post has nothing to do with travel technology, so click here if that’s what you came here for. On the other hand, if you’d like a heartwarming tale of triumph over Kafkaesque bureaucracy, or a step-by-step guide to bypassing obstructionist police departments in your quest for the fingerprints needed for a Singaporean Certificate of Clearance, read on.

For the past half year, I’ve been immersed in the soggy bucket of fun known as an application for permanent residence in Australia. Now, I like to think of myself as somewhat of a connoisseur of obscure immigration bureaucracy, my passports being littered with Saudi work permits, Indonesian multiple-entry business visas and Japanese trainee landing permits, but nothing I’ve seen yet comes close to the sheer bulk and complexity of the Employer Nominated Sponsorship (Subclass 856), recently renamed ENS 186 just to keep us pesky migrants on our toes. The checklist for what to include alone runs to five pages. By the time we finally lodged our application in May, it had grown to a wodge of 74 pages, and that was just our half, with my employer (thanks Lonely Planet!) submitting another lot of the same size.

Perhaps the most pointless hoop to jump through was proof that I possess a “vocational ability” in the English language; in other words, that my English is good enough to work in Australia. Now, given that the ENS 856 is an employer-sponsored visa, you’d think the letter from my employer confirming that they’ve tolerated my antics for over two years and are willing to try to put up with them for another three would suffice, but no cigar: for the Descartesian philosophers at the Department of Immigration & Citizenship (DIAC), it’s not enough to possess practical ability, I have to demonstrate it in theory as well. Moving to the United States at the age of 8 months? Completing the entirety of my education, including a master’s thesis, in English? Nope, nyet, nein: I could either get a combined score of over 5.0 “Modest User” on the IELTS or go pound sand. So I forked out several hundred smackaroos, prepared yet another stack of paperwork (fun fact: IELTS photos are identical to Aussie photo guidelines, except that glasses are not allowed), spent two days to apply for and complete the test, and eventually received my results: 9.0 “Expert User”, the maximum score. I can only presume somebody somewhere is getting a juicy kickback from all this.

But like the boss in an old console game, the toughest obstacle of all awaited at the end of the grueling journey. The application requires submitting criminal record checks for all countries you’ve lived in during the past ten years, and while Australia, Japan and Finland proved no great problem, for Singapore this requires a Certificate of Clearance (COC). As 99% of people requesting this are Singaporeans wishing to leave the ordered shores of the Little Red Dot for good, you are only permitted to request this once in possession of a “document from relevant consulate/immigration authority/government bodies to establish that the certificate is required by such authority”, and the form goes to great lengths to ask why you wish to commit this near-treasonous act.

And if you’re in the 1% who are not Singaporean? Tough luck: in October 2010, the Garmen decided to score political points and stop coddling non-citizen scum, so no COC for you. However, in their grandmotherly kindness the Criminal Investigation Department has instituted an appeals process, allowing non-citizens to rend their garments, sprinkle ashes on their heads and wail for “exceptional, case-by-case basis” permission to apply for a COC (and fill out another form, of course).

Now, filling out a form or two is no great shakes; Lord knows we’ve had plenty of practice recently. However, there are two other requirements: the first, “a bank draft made payable to ‘Head Criminal Records CID’ through a Singapore-based bank”, and the second, a full set of fingerprints.

For the bank draft, the usual approach is to waltz over to the HSBC and OCBC offices in Melbourne and try to convince them to write you an international bank draft for 50 Sing dollars at some stupid markup. (Apparently HSBC will do it for A$18 if you have an account with them.) Being a lazy cheapskate in possession of a Singaporean bank account, though, I worked out another way: order a free DBS iB Cheque online, mailed to Head Criminal Records CID, c/o My Buddy in Singapore and forwarded by him to Australia. There’s only one catch: the cheque is valid for precisely one month, so you need to make sure everything else is lined up… including those fingerprints.

Now, in most sane countries, getting a set of prints done would involve rocking up to the nearest cop shop with a box of donuts in hand and walking out 15 minutes later wiping ink off your grubby fingers. Unfortunately, this is a former part of the British Empire we’re talking about, and Australia has inherited the colonial bureaucracy with gusto, but unlike still triplicate-filing India it’s all been upgraded to high-tech bureaucracy. Here in the great state of Her Majesty Victoria, Defender of the Faith, the police scan and store fingerprints electronically, no ink needed or allowed, which means that unless you’re on really good terms with the local constabulary, they can’t do squat. (As it happens, Singapore also does its fingerprints electronically, but getting their machines to talk directly to Victoria’s machines would obviously be pure crazy talk.) For dealing with the rest of the world, there is a central Fingerprinting Service in Melbourne that can take your prints the old way, so one sunny morning in April I called them up and asked for the next available slot, which would be in October.

Yes, October. That’s a six-month wait to get somebody to press your fingers first onto an ink pad, and then onto a sheet of paper. Why? According to the Department of Immigration, apparently mostly because the police want more money, and have thus been on strike since September 2011. Until recently, you could short-circuit this by taking a trip upcountry to Wangaratta or Whoop Whoop, where waiting times are more like two weeks, but apparently Vic Police got wind of this and now require proof of residence.

Looking for an alternative? There’s precisely one, namely the Australian Federal Police, who unlike the locals charge $145 a pop for the privilege — and still have queues out the door, with the next slot in September, a mere 5 months away.

Not being the kind of guy who takes “no” for an answer, I considered a few ways to shortcut this. The obvious one would be to lie, book a slot outside Melbourne and fake proof of residence (a referee statement would be easy enough), but lying to the police is in general bad juju. Camping at the fingerprinting centre, hoping to snag the slot of a no-show would be another option, but you might be in for a long wait and they certainly don’t seem too cooperative over there. And then there are some companies that do stuff like forensic fingerprinting, but this seems a bit beyond their remit.

But then I heard about getting a set of fingerprints notarized, and I decided to give Frank Guastalegname of Caleandro, Guastalegname & Co in Footscray a ring. Now, the COC requirements state that the prints must be “taken by a qualified Fingerprint Officer at a Police Station or an authorized office of the country he/she is now residing”, and a public notary is not going to take your fingerprints for you; however, Frank was more than happy to witness us fingerprint ourselves. So we printed out a bunch of FBI Form FD-358s, brought them along with our passports, and self-fingerprinted away. With no practice beforehand, the prints came out pretty sloppy, so I would suggest training a bit first: here’s a handy video on YouTube. Frank then stapled the fingerprint sheet to a grand declaration confirming that he had personally witnessed our identity documents and fingerprints, and then signed, stamped and sealed the hell out of the result. (I’m kicking myself for not scanning a copy for posterity, it was a sight to behold.) Total cost for the two of us? $60. Whoo!

So here’s what we sent for the application and appeal, with props to PMD:

1. Application for COC (PDF)

2. Appeal for COC request (PDF)

3. iB cheque for S$100 (for two of us)

4. Notarized fingerprints

5. DIAC information request letter (DIAC has a template for this and will provide a copy on request)

6. Two passport photos

7. Copies of passport, including front page and stamps showing first arrival in Singapore, Employment Pass in Singapore plus departure from Singapore

8. Employment letter(s) while working in Singapore

We initially sent 1 through 6 plus copies of current passports, but they do insist on 7 and 8 as well and sent an e-mail asking for scans, since apparently the police computers don’t talk to the immigration computers. They also understand that PRs don’t get stamps and modern EPs don’t even go in your passport. So don’t worry too much if you’re not quite sure what you need to send, they’ll let you know if you missed something.

Just under a month after they received our application, Singapore finally gave the thumbs-up and mailed out the certificates on June 19 by registered mail. Singapore being Singapore, the confirmation included a SingPost tracking number, which duly told us the letter had been shipped off to Kangarooland — and that’s where the trail ended.

It usually takes about one week for mail to get from Singapore to Australia, but that “usually” is dependent on the tender mercies of Australia Post, an organization with all the efficiency and panache you’d expect from a government-owned corporation with a monopoly. Whenever our mail doesn’t show up and we go complain, the local office blames our crack-addled local delivery guy, who does indeed have a demonstrable habit of delivering our mail to somebody else or somebody else’s mail to us on a weekly basis, and also likes to leave us cards saying a package (with no indication of sender, contents or any tracking ID) is at post office A when it’s actually at post office B. But I’m pretty sure it’s somebody else along the chain who screwed the mail pouch when a letter takes over a month to actually show up, and on occasion letters simply disappear into the ether.

We chewed on what remained of our frayed fingernails and waited, with the occasional ping to Immigration for updates. On July 27th, a good six weeks later, they emailed to say that they hadn’t gotten the COC yet, so could we have Singapore mail out another one? I sent another email to the CID, with a CC directly to our DIAC case officer, and lo and behold: she took another look, found the certificate hiding under a snowdrift of paperwork, and half an hour later, we were Permanent Residents!

And that’s how a “skilled migrant” from a 1st world country with a supportive employer won his family’s right to stay in Australia. Spare a thought for the bewildered refugee with no support, who speaks English as his third language, and has to battle not only with DIAC’s impenetrable but basically fair bureaucracy, but with a venal and corrupt administration (or lack thereof) in his home country as well. Anybody want to try their luck getting a police certificate from Eritrea or the Democratic Republic of Congo?

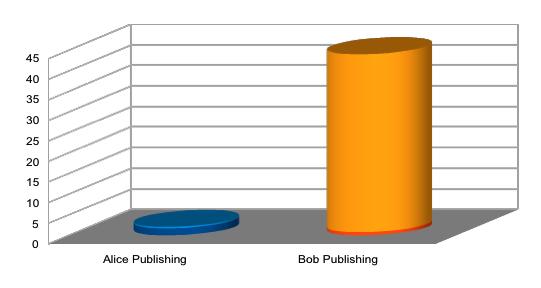

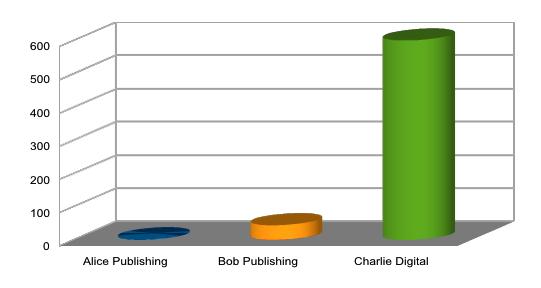

The Wikimedia Foundation, the non-profit organization behind Wikipedia, has today officially announced that they will proceed with the creation of a Wikimedia travel guide. This follows the overwhelming support expressed during the public comment period, with 542 in favor versus 152 against, and the community behind the original travel wiki, Wikitravel, has already regrouped at Wikivoyage in preparation for joining the Wikimedia project.

The end goal is thus that the content and communities from both Wikitravel and Wikivoyage will become Wikimedia Travel, strong and vibrant under a host that shares the ethos and has the technical capability and other resources to maintain it. As an inevitable side effect, Wikitravel the site will die a slow and lingering death.