On July 11, 2012, the Wikimedia Foundation of Wikipedia fame made a decision that has been a long time coming: they decided to support hosting a new wiki devoted to travel, populated with Wikitravel content and, most importantly, the community that built Wikitravel. It’s not a done deal yet, as the decision has to be confirmed by public discussion, but it’s looking pretty good so far, and if it comes true, this second shot at success is almost certain to produce the new gold standard for user-written travel guides, in the same way that Wikipedia redefined encyclopedias.

Let me start by making it clear that this is a personal blog post that does not claim to represent the views of all 72,000+ Wikitravellers out there, much less the Wikimedia Foundation. I’ve played little role in, and claim no credit for, making this fork (legal cloning) happen, and my present employer Lonely Planet has nothing to do with any of this. However, as a Wikitravel user and administrator since 2004, who has done business with Wikitravel’s current owner Internet Brands and seen first hand how they operate, I’ll take a shot at answering three questions I expect to be asked: why the fork is necessary, whether the fork will succeed, and how Internet Brands will react.

First, a quick history recap. Founded in 2003 by Evan Prodromou and Michele Ann Jenkins as a project to create a free, complete, up-to-date and reliable worldwide travel guide, Wikitravel grew at an explosive pace in its initial years and seemed on track to do to printed travel guides what Wikipedia had done to encyclopedias. But in 2006, with ever-increasing hosting and support demands and no money coming in, Evan and Michele decided to sell the site to website conglomerate Internet Brands (IB), best known at the time for selling used cars at CarsDirect.com.

IB made many promises at the time to respect the community, keep developing the site and tread carefully while commercializing it. The German and Italian wings of Wikitravel didn’t believe a word of it, so they rose up in revolt and started Wikivoyage, the first fork of Wikitravel, which successfully supplanted the original for those two languages. But the rest of us, including myself, opted to give IB a chance and see how things turned out.

Now, to give Internet Brands credit where credit is due, it could have been considerably worse. They’ve kept the lights on for the past five years, although overloaded or outright crashed database servers often made editing near-impossible. They have respected the letter of the Creative Commons license, if not the spirit, as from day one they have refused to supply data dumps. And they grudgingly abandoned some of their daftest ideas, like splitting each page into tiny chunks for search-engine optimization, after community outcry. On a personal level, I also dealt with IB while running Wikitravel Press, and while they could be a tough negotiating partner, whatever they agreed to, they delivered.

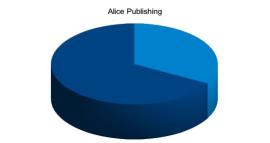

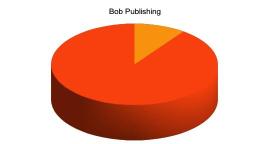

What they did not do, though, was develop the site in any way that did not translate directly into additional ad revenue. The original promise to restrict themselves to “unobtrusive, targeted, well-identified ads” soon mutated into people eating spiders and monkey-punching Flash monstrosities, with plans to cram in a mid-page booking engine despite vociferous community opposition. Once Evan & Michele were kicked off the payroll, bug reports went unattended for years, and not a single new feature came through, with the solitary exception of a CAPTCHA filter in a feeble attempt to stem the ever-increasing tide of spam. Even the MediaWiki software running the site was, until very recently, stuck on version 1.11, five years and a full eight point releases behind Wikipedia. Unsurprisingly, the once active community started to fade away, with all of Wikitravel’s statistics (Alexa rank, page views, new articles, edits) slowly flatlining.

By 2012, with various feeble ultimatums ignored by IB and no other way out in sight, the 40-odd admins of the site got together and decided to fork. After a short debate and a few feelers sent out in various directions, unanimous agreement was reached that jumping ship to the Wikimedia Foundation (WMF) was the way to go, with Wikivoyage also happy to join in. Reaction on the Wikimedia side was almost as positive, and as I type this the birth of a new, truly free travel wiki appears to be only weeks away. (Sign up here to be notified when it is!)

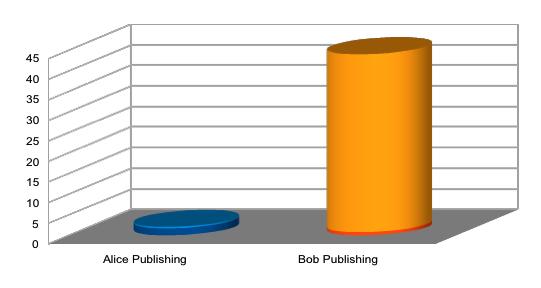

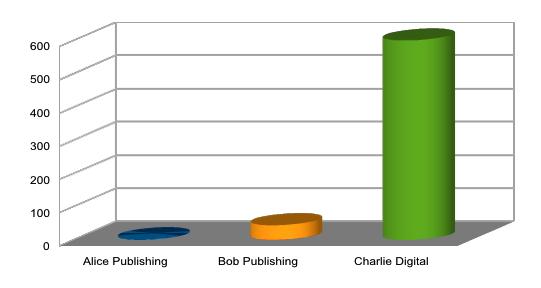

The natural question, then, is which of the two forks will win. Internet Brands has triggered many a community revolt before, but the track record of those revolts is distinctly mixed. QuattroWorld has found a stable user base but is still below AudiWorld in traffic rank; Cubits.org did not put a dent in Dave’s Garden; and the jury is still out on FlyerTalk vs MilePoint, though FlyerTalk retains a commanding lead.

Nevertheless, in Wikitravel’s case, I feel confident in predicting the answer: the new fork will win, by a mile. Many of the reasons are clear — Wikitravel’s license allows copying all the content, nearly all editors and admins will jump ship, and the Foundation’s technical skills in running MediaWiki are second to none — but one takes some explaining.

The primary reason Wikitravel shows up so well in Google results is that it is linked from nearly every article about a place in Wikipedia. Now, ordinary garden-variety links from Wikipedia to other sites are ignored completely by Google, because they have the magic anti-spam rel=nofollow attribute set. However, Wikitravel is one of very few sites that are linked through an obscure feature called “interwiki links”, which do not have that attribute set, and are thus counted in full by Google when it computes the importance of pages. So the moment those links are changed to point to the new fork (and all it will take is one edit of this page), the new site will be propelled to Google fame and Wikitravel.org will begin its inexorable descent into Internet obscurity.

The final question thus presents itself: How will Internet Brands react? We have some clues already: as soon as they twigged, they simultaneously pleaded that everybody return to their grandmotherly embrace, tried to spin the fork as a “self-destructive” rogue admin coup against a Nixonesque “silent supermajority”, and attempted to censor discussion on Wikitravel itself. When these attempts unsurprisingly fell flat, the phone lines started ringing, with head honcho Bob “Passion to Mission” Brisco calling up the WMF with promises of “innovative collaboration” if only they could keep their sticky fingers in the pie.

From Wikitravel’s point of view, it would obviously be best if Internet Brands cheerfully admitted defeat and handed over the domain and trademark to the WMF, which would avoid the need for a messy renaming. However, from what I’ve seen while following the (private) discussion from the sidelines for a few days now, Internet Brands insists on keeping full control of the site and minting advertising money, and all they want from the WMF is a seal of approval, paid for with a slice of the loot. The non-profit Foundation, on the other hand, aims simply to share knowledge freely and has a long-standing aversion to advertising, so all it is able to offer is an easy way out of what will otherwise be a PR disaster. I’d still like to hope a deal can be done, but quite frankly, the gap between these two positions does not look bridgeable at the moment.

The other extreme is that Internet Brands tries to prevent or sabotage the fork via legal action, as they did in the vBulletin vs XenForo case that’s apparently still rumbling through the courts. I think this is even less likely, though: all they own is the Wikitravel trademark and domain, so as long as the new (and presently undecided) name is sufficiently dissimilar, they will not have a legal leg to stand on. Unlike the XenForo case, there are no employees jumping ship, the software is open source, and the content itself is Creative Commons licensed and can be copied at will.

The most likely outcome is thus the status quo: IB will keep doing the only thing it can, squeezing every last drop of revenue from visitors who venture in, and probably turning the infomercial volume up to 11. But with the community soon to become a ghost town, and ever more spammers and vandals dropping in to trash the place with nobody left to clean up after them, they will probably have to disable editing sooner or later, and the Wikitravel.org site will die a slow, ignominious death.

It remains to be seen if the new travel guide can succeed among a broader public: travel information online and collaborative writing have both moved on since 2003, and there are still unresolved problems with asking users to write and agree on fundamentally subjective content. But the new Wikitravel will remain the world’s largest open travel information site for the foreseeable future, and will certainly give the closed competition a run for their money. Wikitravel is dead, long live Wikitravel!

To register your support or opposition to the fork proposal, please head to the Request for Comment on the Wikimedia Meta site. Translations of the RFC into other languages are particularly welcome.

The RFC is expected to run until the end of August, with a formal decision and the launch of the new site to follow soon thereafter. To be notified if and when the new site goes live, please sign up at this form. You will receive a single mail, and your e-mail address will then be thrown away.

Update: On September 5, the Wikimedia Foundation officially announced that they will proceed with the fork, and contrary to my optimistic prediction, Internet Brands is suing everyone left, right, and center. See follow-up post.

Update 2: The new site, called Wikivoyage, was launched on January 15, 2013 and is already better than Wikitravel ever was.